My first software product took eleven months to build. A project management tool for construction companies. I interviewed maybe a dozen people in the industry, sketched wireframes for weeks, then spent the better part of a year writing code with a small team. We launched with 40+ features, a polished UI, and a pricing page that we’d A/B tested three times.

We got 12 signups in the first month. Four of them were friends. By month three, we had 23 paying customers and a burn rate that was clearly unsustainable. We shut it down.

The second product — an inventory tracker for e-commerce sellers — took eight months. Same pattern. Built too much, launched too late, discovered that the market wanted something different from what we’d assumed. Another shutdown.

The third product worked. Not because I suddenly became a better developer or found a better market. It worked because I stopped building finished products and started building the smallest possible thing that could test whether anyone cared.

The Expensive Mistake Most Founders Make

There’s a specific trap that technical founders fall into. You have the skills to build, so you build. Requirements feel clear in your head, the architecture makes sense, and each feature seems obviously necessary. Six months later you have a product that’s technically impressive and commercially irrelevant.

The problem isn’t engineering. It’s the gap between what you think users need and what they’ll actually pay for. That gap can only be closed by putting something real in front of people early — not a pitch deck, not a landing page with an email signup, but a working piece of software that solves one specific problem.

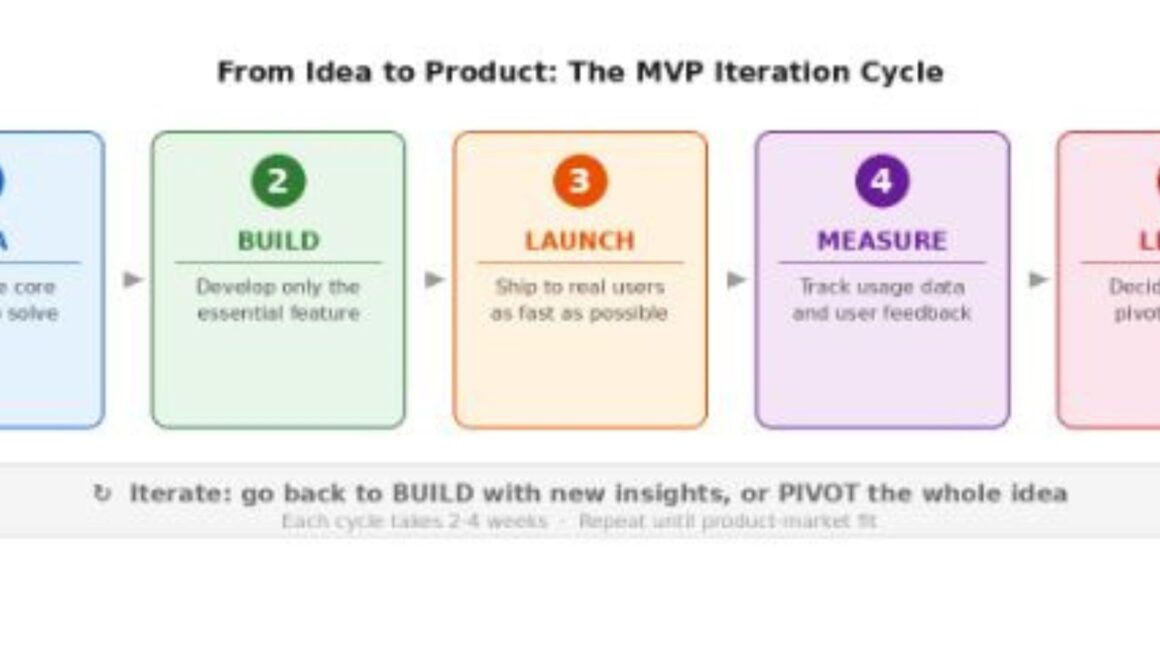

If you’ve spent time around startup methodology, you’ve probably come across the question of what MVP stands for in business. The concept, Minimum Viable Product, gets thrown around a lot, but most people misunderstand what “minimum” actually means in practice. It doesn’t mean a half-broken prototype. It means the smallest version of your product that delivers real value to a real user and generates real feedback.

What My Third Product Looked Like at Launch

The Idea

A scheduling tool for freelance consultants. I’d been freelancing myself and noticed that every consultant I knew was juggling Google Calendar, a spreadsheet for availability, and email chains with clients trying to find meeting times. The friction was obvious.

What I Didn’t Build

No client portal. No invoicing integration. No team calendars. No mobile app. No custom branding. All of these were on my original feature list, and every single one of them felt essential when I wrote it down.

What I Actually Shipped

One page where a consultant could set their available hours. One shareable link that clients could click to book a slot. An email confirmation for both parties. That’s it. The whole thing took three weeks to build.

I sent the link to 15 consultants I knew from freelancing communities. Eight of them used it within the first week. Five of them sent it to their own clients. Within a month I had real usage data showing exactly which parts of the workflow mattered and which features people were actually asking for — and they were different from what I’d originally planned.

The Feedback Loop That Changes Everything

The cycle is simple on paper: build something small, ship it, watch what happens, learn, repeat. What makes it powerful is speed. Each iteration takes 2-4 weeks instead of 6-12 months. That means you can be wrong five times in a row (10-20 weeks of work) and still find product-market fit faster than someone who spends a year building their first version.

With my scheduling tool, the first iteration revealed that consultants didn’t care about the calendar UI at all. They cared about the booking link. The second iteration added time zone handling — something I hadn’t even considered, but three users asked about it in the same week. The third iteration introduced buffer time between appointments, which turned out to be the feature that made people switch from their existing tools.
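To make “buffer time” concrete, here is a minimal Python sketch of how a booking link might turn a consultant’s available hours into bookable slots. The function name `bookable_slots`, its parameters, and the fixed-grid approach are illustrative assumptions, not the tool’s actual code, and time zone handling is deliberately left out.

```python
from datetime import datetime, timedelta

def bookable_slots(day_start, day_end, duration, buffer=timedelta(0), booked=()):
    """Hypothetical helper: list free slot start times between day_start and day_end.

    duration -- length of each meeting
    buffer   -- minimum gap to keep between any two meetings
    booked   -- (start, end) pairs for meetings already on the calendar
    """
    slots = []
    start = day_start
    # Walk a fixed grid: each candidate starts one meeting plus one buffer after the last.
    while start + duration <= day_end:
        end = start + duration
        # Reject the candidate if it comes within `buffer` of any existing booking.
        clashes = any(
            start < b_end + buffer and b_start < end + buffer
            for b_start, b_end in booked
        )
        if not clashes:
            slots.append(start)
        start += duration + buffer
    return slots


day = datetime(2024, 1, 8)
print(bookable_slots(day.replace(hour=9), day.replace(hour=12),
                     timedelta(minutes=30), buffer=timedelta(minutes=15)))
```

With 30-minute meetings in a 9:00 to 12:00 window, this offers six slots without a buffer and four with a 15-minute buffer. Walking a fixed grid keeps the logic short; a real booking tool might instead realign slots around existing bookings.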

None of these insights would have surfaced in a requirements document or a user interview. They came from watching real people use a real product.

How to Decide What Goes Into Your First Version

Start With One User, One Problem

Forget about market segments and personas. Pick one specific person — ideally someone you can talk to — and solve their most painful problem. If you can’t articulate the problem in one sentence, you’re not focused enough. “Freelance consultants waste 30 minutes per client trying to schedule meetings over email” is specific. “People need better productivity tools” is not.

Cut Until It Hurts, Then Cut More

Write down every feature you think you need. Now cross out everything that doesn’t directly solve the core problem. If your product can’t function without a particular feature, keep it. If it’s just “nice to have” or “users will expect it,” remove it. You can always add it in iteration two.

Set a Time Limit, Not a Feature Target

Give yourself 3-4 weeks for the first build. Whatever you can ship in that window is your MVP. This constraint forces brutal prioritization. It’s uncomfortable, and the thing you launch will feel incomplete. That’s correct. The goal isn’t a polished product; it’s a learning tool.

Signs Your MVP Is Working (And Signs It Isn’t)

Positive signals: Users come back without being reminded. They share it with other people. They complain about missing features — this means they care enough to want it to be better. They ask about pricing before you’ve even set one.

Warning signs: Users sign up but never return. They give polite feedback but don’t actually use it. Your usage graphs are flat. The features people request have nothing to do with your core value proposition — this means you’ve built the wrong thing, and adding features won’t fix it.
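One rough way to tell “users come back without being reminded” apart from “users sign up but never return” is to measure how many signups show activity on a later day. The sketch below assumes you log one `(user id, date)` pair per day a user is active; the function name `return_rate` and the 7-day window are hypothetical choices, not a standard metric definition.

```python
from datetime import date, timedelta

def return_rate(signups, activity, window_days=7):
    """Hypothetical metric: share of signups active again within `window_days`.

    signups  -- dict mapping user id to signup date
    activity -- iterable of (user id, date) pairs, one per day a user was active
    """
    if not signups:
        return 0.0
    returned = set()
    for user, day in activity:
        joined = signups.get(user)
        # Only count activity strictly after the signup day and inside the window.
        if joined is not None and joined < day <= joined + timedelta(days=window_days):
            returned.add(user)
    return len(returned) / len(signups)


signups = {"a": date(2024, 1, 1), "b": date(2024, 1, 1), "c": date(2024, 1, 2)}
activity = [("a", date(2024, 1, 1)), ("a", date(2024, 1, 3)), ("b", date(2024, 1, 1))]
print(return_rate(signups, activity))  # only user "a" came back, so one third
```

A value near zero for a group of signups matches the “sign up but never return” warning sign.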

If you’re seeing warning signs, don’t add more features. Step back and question your assumptions about the problem itself. Sometimes the right move is to pivot the entire product direction based on what you’ve learned.

What I’d Tell My Past Self

That eleven-month construction project management tool? The core idea wasn’t bad. The scheduling feature inside it — about 5% of what we built — was the part users actually mentioned in feedback calls. If I’d shipped just that piece as a standalone tool in week four, I might have found the market signal without burning through half my savings.

Building software is addictive. Writing code feels like progress. But the only progress that matters before product-market fit is learning, and learning requires putting something imperfect in front of real users as fast as humanly possible. Ship small, ship early, and let the market tell you what to build next.

Frequently Asked Questions

How long should it take to build an MVP?

A well-scoped MVP typically takes 3-8 weeks to build, depending on technical complexity. If your initial build is taking longer than two months, you’re likely including too many features. The goal is to test your core assumption about the problem, not to build a complete product. Longer timelines increase the risk of building something nobody wants.

Can an MVP be too simple?

Yes, but you can go much simpler than most founders think before you reach that point. An MVP needs to deliver genuine value for at least one use case. It shouldn’t be a mockup or a landing page with no functionality. It should work, even if it only does one thing. If a user can solve their problem with your MVP and would be disappointed if it disappeared, you’ve hit the right level of simplicity.

Should I charge for my MVP from day one?

Not necessarily on day one, but introduce pricing earlier than feels comfortable. Free users behave differently from paying users. Someone willing to pay even $5/month for your tool is a much stronger signal of product-market fit than a thousand free signups. Many successful products start with a free beta period of 2-4 weeks, then introduce pricing to filter for genuine demand.

What if my MVP gets negative feedback?

Negative feedback on an MVP is valuable data, not a failure. It tells you which assumptions were wrong and gives you specific direction for the next iteration. The real failure is building for months in isolation and discovering at launch that nobody wants what you’ve made. Negative feedback in week three costs almost nothing to recover from. Negative feedback in month eleven can end a company.

Do I need a technical co-founder to build an MVP?

Not always, but you need some way to build working software. Options include no-code tools for simpler products, hiring a freelance developer for a focused sprint, or working with a development agency that specializes in MVPs. The key is keeping the scope tight enough that you can get to a working version quickly regardless of which path you choose.